A student preparing for the Multistate Bar Exam (MBE) could liken the exam to being posed 200 Rubik’s cube-type questions. Okay, the questions aren’t as hard as solving really hard puzzles, but examinees may sometimes feel that the MBE is that difficult.

Bonner and D’Agostino (2012), in their research study on the MBE, test the test by asking:

• How important is a test-taker’s knowledge of solving legal problems to performance on the MBE?

• To what degree is performance on the MBE dependent on general knowledge, which doesn’t have anything to do with the law or legal reasoning? For instance, are test-wise strategies and common-sense reasoning important to doing well on the MBE?

Background

With thousands of examinees taking this exam annually since 1972, you would think that the answer is a resounding yes to the first and a tepid yes to the second. Why else would most of the states rely on the test if it had not been proven valid? But surprisingly, there hasn’t been any published evidence that this high-stakes exam does in fact “assess the extent to which an examinee can apply fundamental legal principles and legal reasoning to analyze a given pattern of facts,” as one article characterized the MBE. In other words, there has been no published evidence that the MBE tests skills in legal problem solving.

Establishing validity is what gives any test its worth. Validity is not an all-purpose quality; it has meaning only in reference to the test’s purpose. Validity studies are not uncommon in other fields such as medical licensure, where studies have established the validity of tests of medical clinical competence, the internal medicine in-training exam, and the family physician’s examination.

A finding that common-sense reasoning and general test-wise strategies are important to MBE performance would in fact indicate that the test lacks validity as a measure of legal problem solving. There is indirect support for the view that general common sense and test-wise strategies aren’t important. In his review of research, Klein (1993) refers to a study in which law students outperformed college students, untrained in the law, on the MBE. But we can’t conclude from this that common sense and test-wise strategies are unimportant for doing well on the MBE, since maturation effects (just being older and smarter as a result), rather than legal training, cannot be ruled out as a reason for the superior performance of law students.

The Study

In devising a study to measure the validity (construct and criterion) of the MBE, Bonner and D’Agostino (2012) drew on the very large body of research about novice-expert differences in problem solving. This research looks at the continuum of expertise suggested by these extremes and the spaces in between. Expert-novice studies compare how people with different levels of experience in a particular field of endeavor go about solving problems in that field. By doing this, scientists hope to see what beginners and intermediates need to do to go to the next level. Cognitive scientists have looked at these differences in many different areas (mathematics, physics, chess playing and medical diagnosis), but legal problem solving hasn’t received a lot of attention.

So what does this research tell us? Experts draw on a wealth of substantive knowledge to solve a problem in their area of expertise. They know more about the field and have a deeper understanding of its subtleties. An expert’s knowledge is organized into complex schemas (abstract mental structures) that allow experts to quickly home in on the relevant information. Their knowledge isn’t limited to the substance of the area (called declarative knowledge); experts also have better executive control of the processes they use to solve a problem. They have better “software” that allows them to use subroutines that separate good paths to a solution from bad ones. In his article on legal reasoning skills, Krieger (2006) found that legal experts engage in “forward-looking processing.”

By contrast, novices and intermediates are rookies with varying degrees of experience in the domain in question. Intermediates are a little better than novices because they have more knowledge of the substance of a field, but their knowledge structures (schemas) aren’t as complex or accurate as those of experts. Compared with experts, intermediates are slower at weeding out wrong solutions and less efficient at homing in on the right set of possible solutions.

Bonner and D’Agostino classified law graduates as intermediates and devised a study to peer into the processes involved in answering MBE questions. They anticipated that law school graduates needed an amalgam of general as well as domain-specific reasoning skills and thinking-about-thinking (metacognitive) skills to do well on the MBE.

Bonner and D’Agostino used a “think-aloud” procedure (online verbalizations made during the very act of answering an MBE question) to peer into the students’ mental processes. A transcript of the verbalizations was then laboriously coded into types, which in turn fell into the broader categories of legal reasoning (classifying issues, using legal principles and drawing early conclusions), general problem solving (referencing facts and using common sense), and metacognition (statements noting self-awareness of learning acts). The data were then evaluated with statistical procedures to see which of the behaviors were associated with choosing the correct answer on MBE questions.

Findings

Here are the results of Bonner and D’Agostino’s study:

• Using legal principles had a strong positive correlation (r = .66) with performance. When students organized decisions by checking all options and marking the ones that were most relevant and irrelevant, they were more likely to use the correct legal principles.

• Using common sense and test-wise strategies had a negative, but not statistically significant, correlation with performance. In fact, test strategizing had a negative relationship with performance. Using “deductive elimination, guessing, and seeking test clues was associated with low performance on the selected items.”

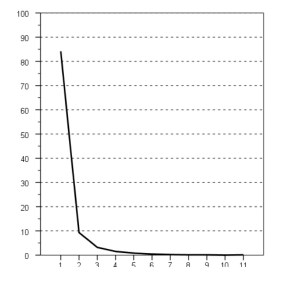

• Among the metacognitive skills, organizing (reading through and methodically going through all of the options) had a strong positive correlation (r = .74) with performance. (Note: to understand the meaning of a correlation, square the r; the resulting number is the proportion of the variation in one variable that is explained by the other. In this example, 55% of the variation in MBE performance is accounted for by organizing.) When students are self-aware and monitor what they are doing, they increase their chances of picking the right answers.
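For readers who want to check the arithmetic, the squaring rule in the note above can be sketched in a few lines of Python. This is only an illustration: the function name `variance_explained` is made up for this example, and the two r values are simply the ones reported in the findings above.

```python
def variance_explained(r: float) -> float:
    """Return r squared: the share of variation in one variable explained by the other."""
    if not -1.0 <= r <= 1.0:
        raise ValueError("a correlation coefficient must lie between -1 and 1")
    return r * r


# Correlations with MBE performance, as reported in the findings above.
reported = {"using legal principles": 0.66, "organizing": 0.74}

for behavior, r in reported.items():
    # The .0% format turns a proportion such as 0.5476 into "55%".
    print(f"{behavior}: r = {r:.2f}, r^2 = {variance_explained(r):.0%}")
```

Running it prints 44% for using legal principles and 55% for organizing, which matches the figure given in the note.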

The Takeaway

The bottom line is that the MBE does possess relevance to the construct of legal problem solving, at least with respect to the questions on which the think-aloud was performed. In short, Bonner and D’Agostino demonstrate that the MBE has validity for the purpose of testing legal problem solving.

Unsurprisingly, using the correct legal principle was correlated strongly with picking the right answer. This means that thorough understanding and recall of the legal principles are critical to performance. That puts a premium not only on knowing the legal doctrine well but also on exposure to as many different fact patterns as possible, which helps students spot the legal rules and apply (instantiate) them to new facts. Analogical reasoning research suggests that more diverse exposure to applications of the principles should help trigger a student’s memory and retrieval of the appropriate analogs that match the fact pattern of the question. Students should take a credit-bearing, bar-related review course, and they should take this course seriously.

If you want to do well on the MBE, don’t jump to conclusions and pick the first answer that seems right. The first seemingly right answer could be a distractor, designed to trick impulsive test-takers. Because of the time constraints, examinees are especially susceptible to picking the first seemingly right answer. But the MBE punishes impulsivity and rewards thoroughness, so examinees should go through all the possible choices and mark the good and the bad ones. They shouldn’t waste their time with test-wise strategies such as eliminating choices based on pure common sense or deduction that has no reference to legal knowledge, or looking for clues in the stem of the question. Remember, test-wise strategies unconnected with legal knowledge had a negative relationship to MBE performance.

Although errors in facts that result from poor comprehension were not prevalent among the participants in Bonner and D’Agostino’s study, that doesn’t mean that good reading comprehension is unimportant. If a student has trouble with reading comprehension, the student should start the practice of more active engagement with fact patterns and answer options as Elkin suggests in his guide.

Finally, more training in metacognitive skills should improve performance. Metacognition, which is often confused with cognitive skills such as study strategies, is practiced self-awareness. As Bonner and D’Agostino put it, “practice in self-monitoring, reviewing, and organizing decisions may help test-takers allocate resources effectively and avoid drawing conclusions without verification or reference to complete information.”